June 29, 2017

Introduction

The positive impact of Response to Intervention (RTI) over the past 40 years, plus the recent emphasis on social-emotional aspects of learning, has made it possible to address the academic, social, emotional, and developmental needs of all learners by aligning empirical data, resources, and support. In other words, Multi-Tiered System of Supports (MTSS) works. Within the MTSS model, RTI continues to capture the best use of data-fueled insights to support learners. The pillars of RTI—universal screening, proven interventions, and monitoring progress toward goals—remain constant. In this blog post, we explore fresh ways to think about screening and progress monitoring.

Screening as a barometer

Traditionally, RTI screening data were primarily used as one of multiple measures to identify students in need of additional support. Screening operated somewhat like radar, pinpointing each student’s distance from a location—in this case, the benchmark.

What if, instead, we thought about screening as a barometer? Just as a barometer measures the force exerted by the atmosphere, universal screening measures the pressure exerted on core instruction by the atmosphere surrounding the benchmark. If the benchmark lacks rigor, we may fail to identify students on the cusp of challenge; if it is too rigorous, we may lack the resources to support all identified students. As VanDerHeyden et al. (2016) write, “trying to provide intervention to more than 20 percent of students rapidly overwhelms the system’s resources,” increasing the pressure on core instruction.

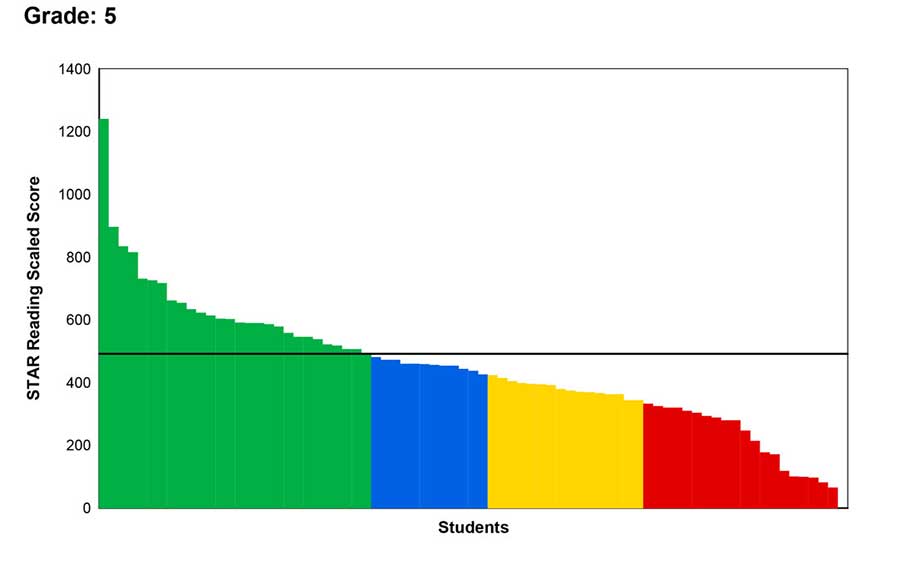

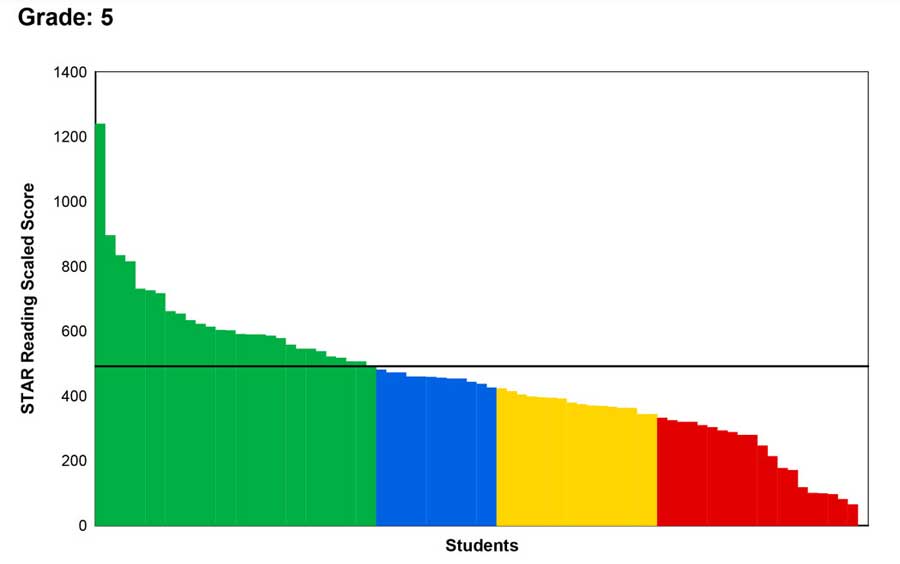

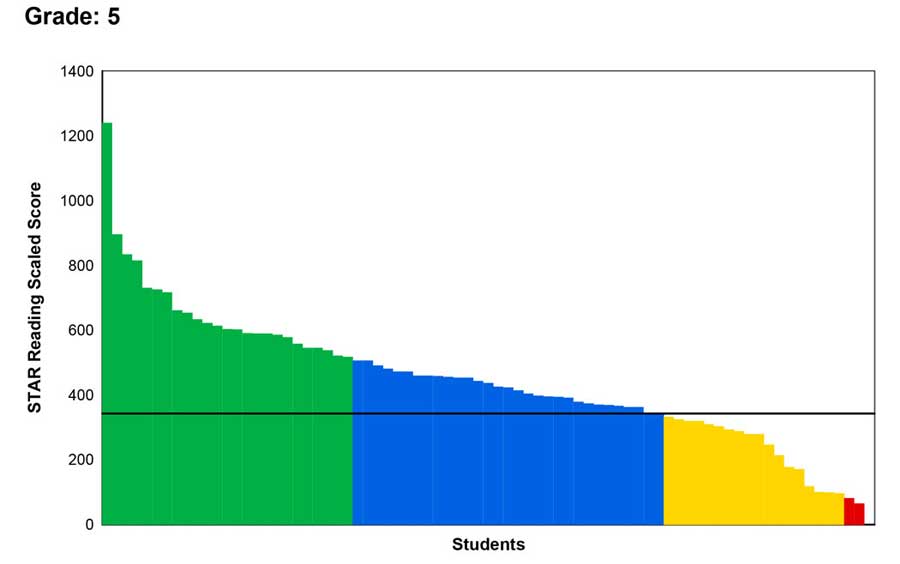

Educators using Renaissance Star 360® for screening can, and often do, customize the benchmark to reflect the district’s goals. The three images displayed above reflect data from the same screening event. Note that the shape of the data is identical in all three screening reports; only the benchmark changes. The first two reports reflect benchmarks at the 40th and 50th percentile rank (PR). The third report reflects student data within the atmosphere of the proficiency benchmark for the state summative exam.

WORM

At a recent meeting focused on Star screening data, Richard Slade, Head Teacher for Plumcroft Primary School in the UK, shared the concept of “Write Once Read Many” (WORM). Write once—generate data via screening. Read many—use screening data for multiple purposes. Think about viewing data through both the RTI and state proficiency lenses. For the purposes of informing intervention decisions, view the data via an established, consistent benchmark. This helps determine how many students you can comfortably serve in Tiers 2 and 3. Then, view the data again through the state proficiency lens as a barometer to check the strength of core instruction and its impact on each student.

If working with state proficiency, keep in mind that despite the efforts of the two assessment consortia, proficiency cut scores vary significantly from state to state, and even among assessed grade levels within a state. It is not uncommon to see proficiency around the 80th PR, which raises questions about the wisdom of setting such a high standard to inform intervention decisions. “Read many” with data reported in relation to the state proficiency benchmark is perhaps most effective in informing core instructional decisions.
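The "read many" idea can be sketched in a few lines of Python. This is only an illustration, not a Star 360 feature or API: the percentile ranks are invented, the function names are hypothetical, and the state proficiency cut is assumed to sit at the 80th PR purely for the example. The 20 percent capacity figure comes from the VanDerHeyden et al. (2016) quotation earlier in the post.

```python
from typing import Dict, List

# Hypothetical percentile ranks (PR) from ONE screening event ("write once").
# These numbers are invented for illustration only.
PERCENTILE_RANKS: List[int] = [
    12, 25, 33, 38, 41, 47, 52, 58, 63, 71,
    79, 85, 90, 96, 55, 44, 67, 74, 82, 29,
]

# Benchmarks to read the same data against ("read many").
# The 80th PR state proficiency cut is an assumption; real cuts vary by state and grade.
BENCHMARKS: Dict[str, int] = {
    "40th PR": 40,
    "50th PR": 50,
    "state proficiency (assumed 80th PR)": 80,
}

# Share of students a system can serve before intervention resources are
# overwhelmed, per the 20 percent figure quoted from VanDerHeyden et al. (2016).
INTERVENTION_CAPACITY = 0.20


def share_below(prs: List[int], benchmark_pr: int) -> float:
    """Return the fraction of students scoring below the benchmark PR."""
    return sum(1 for pr in prs if pr < benchmark_pr) / len(prs)


def read_many(prs: List[int], benchmarks: Dict[str, int]) -> Dict[str, float]:
    """Apply every benchmark to the same screening data."""
    return {label: share_below(prs, cut) for label, cut in benchmarks.items()}


if __name__ == "__main__":
    for label, share in read_many(PERCENTILE_RANKS, BENCHMARKS).items():
        flag = "exceeds" if share > INTERVENTION_CAPACITY else "within"
        print(f"{label}: {share:.0%} below benchmark ({flag} 20% capacity)")
```

The student scores never change between the three readings; only the benchmark does. A reading that flags far more than 20 percent of students is better treated as a signal about core instruction than as an intervention roster.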

Sound goals and progress monitoring

“Intervening without consideration for what a student specifically needs is like choosing an antibiotic without identifying the bacteria causing an infection,” VanDerHeyden et al. (2016) write. Star screening data highlight the potential need for intervention and provide insights to specify what that student must gain during intervention.

Keep in mind that goal setting is about the development of the student and the effectiveness of the intervention. Essentially, you set a goal for the student and for the intervention. Goals need adequate time and enough test administrations to ensure that you make a sound decision. Traditionally, Tier 2 interventions were delivered and decisions made about their effectiveness in 6–8 weeks, but there is little empirical evidence to support that practice (Shapiro, 2013).

Psychometrically, we need to make sure we have allowed time for the student to develop, and assessed often enough, over a long enough period, to make progress-monitoring decisions with confidence. These “long enough periods” are determined by grade spans, for example:

- In the primary grades, allow at least 8–12 weeks of intervention and progress monitoring.

- For intermediate grades (3rd–5th), allow 12–18 weeks at a minimum.

- At the secondary level (6th–high school), 18 or more weeks may be required to observe and understand lasting changes in academic progress.

During the weeks of intervention, Star 360 should be administered at least five times to support psychometrically sound progress-monitoring decisions. The aim is to balance the need for quality data with the need to protect instructional time and make the right decision about the student.
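As a rough illustration, the duration and administration guidelines above could be turned into a simple readiness check. This is a minimal sketch under stated assumptions: it uses the lower bound of each grade-span range as the minimum, and the class and function names are hypothetical rather than part of any Renaissance product.

```python
from dataclasses import dataclass

# Minimum weeks of intervention before a progress-monitoring decision.
# Assumption: the lower bound of each range given in the post is the floor.
MIN_WEEKS = {
    "primary": 8,        # 8-12 weeks
    "intermediate": 12,  # grades 3-5, 12-18 weeks
    "secondary": 18,     # grade 6 through high school, 18+ weeks
}

# The post calls for at least five test administrations during intervention.
MIN_ADMINISTRATIONS = 5


@dataclass
class MonitoringPlan:
    """Hypothetical record of one student's progress-monitoring history."""
    grade_span: str       # "primary", "intermediate", or "secondary"
    weeks: int            # weeks of intervention completed so far
    administrations: int  # number of tests administered so far


def is_decision_ready(plan: MonitoringPlan) -> bool:
    """True when both the duration and the test-count minimums are met."""
    enough_time = plan.weeks >= MIN_WEEKS[plan.grade_span]
    enough_data = plan.administrations >= MIN_ADMINISTRATIONS
    return enough_time and enough_data


if __name__ == "__main__":
    plan = MonitoringPlan(grade_span="intermediate", weeks=10, administrations=5)
    print(is_decision_ready(plan))  # 10 weeks is short of the 12-week floor
```

A check like this only says whether there is enough data to decide; it says nothing about whether the intervention is working, which still depends on the student's growth toward the goal.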