April 30, 2020

Should we be assessing our students during this time of school closures?

Because Renaissance is one of the nation’s leading K–12 assessment providers, it was inevitable that we would receive this question during the spring of 2020. With school buildings shuttered but learning activities continuing, many school and district leaders have reached out to us to ask whether Star Assessments can be administered remotely.

From a technical perspective, the answer is: Absolutely. With appropriate internet protocol (IP) restrictions lifted, Star Assessments can be administered in a remote learning environment. The more nuanced considerations, however, are why schools would or would not want to administer the tests remotely, and how the results from a remote administration might be impacted.

Let’s begin with the reasons why some schools have considered remote administration of Star. During regular school operations, data from Star is used for a variety of purposes, including formal progress monitoring of interventions and program placement. With the dropping of most federal- and state-mandated testing requirements for spring 2020, many of these considerations have fallen by the wayside—yet many educators still have a fear of missing out on something. They ask, in particular, about scores like Student Growth Percentile (SGP) and specific Star reports they rely on that require that tests be taken within certain time windows.

What would a school gain from testing remotely this spring? With a spring test administration, schools would have the following for students:

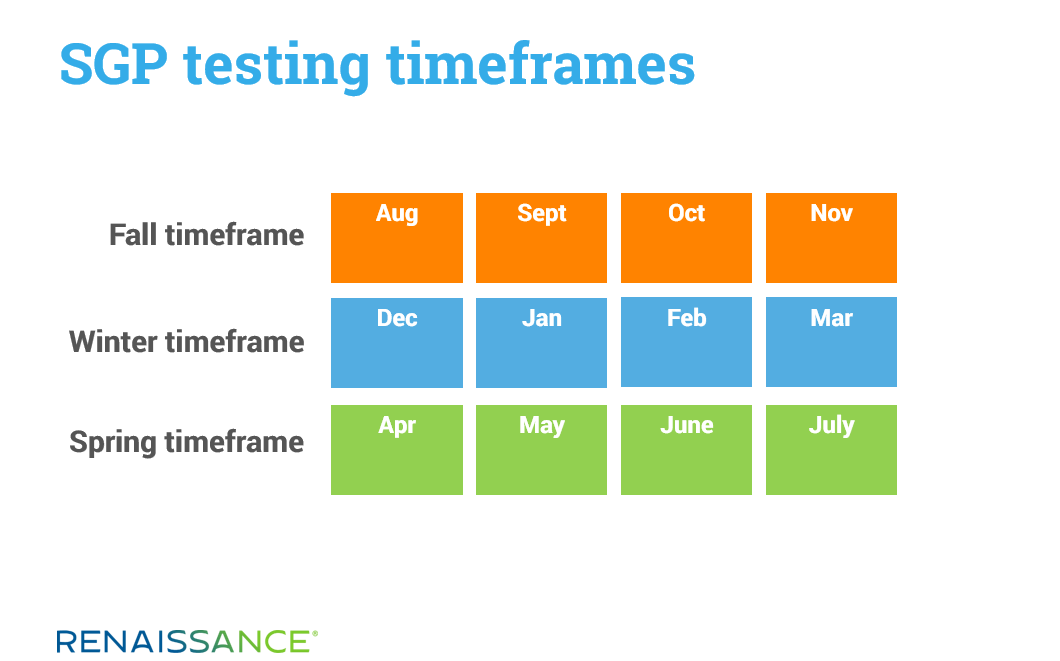

Winter to Spring and Fall to Spring SGP scores. As growth scores based on detailed calculations, SGPs require tests to be administered within specific timeframes, as shown in the graphic below. If no tests occur in the Spring timeframe, generating Winter to Spring and Fall to Spring SGP scores becomes impossible. (A potential workaround, however, is discussed below.)
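The window requirement can be illustrated with a minimal sketch. It is not how Star computes SGPs. It only shows which window-to-window pairs have a test in both windows. The Spring window (April 1 to July 31) is the one stated in this article; the Fall and Winter boundaries, and the function names `window_for` and `available_sgps`, are assumptions made for illustration. All dates are assumed to fall within a single school year.

```python
from datetime import date

def window_for(test_date: date) -> str:
    """Map a test date to a testing window by calendar month."""
    month = test_date.month
    if 4 <= month <= 7:
        return "Spring"   # April 1 to July 31, as stated in the article
    if 8 <= month <= 11:
        return "Fall"     # ASSUMED boundary: August 1 to November 30
    return "Winter"       # ASSUMED boundary: December 1 to March 31

def available_sgps(test_dates):
    """List the window pairs for which both windows contain a test.

    Assumes every date belongs to the same school year.
    """
    windows = {window_for(d) for d in test_dates}
    pairs = [("Fall", "Winter"), ("Winter", "Spring"), ("Fall", "Spring")]
    return [f"{start} to {end}" for start, end in pairs
            if start in windows and end in windows]

# A student who tested in October and January but not in the spring:
print(available_sgps([date(2019, 10, 1), date(2020, 1, 15)]))
# → ['Fall to Winter']
```

With no test in the Spring window, only the Fall to Winter pair remains, which matches the situation described above.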

Forecasts of high-stakes test performance. Educators use Star to forecast student proficiency on a variety of high-stakes tests—including state summative tests, the ACT, and the SAT. These projections can be generated at any point during the school year, but if a Star test occurs, say, six months before the state summative test date, Star can only make a projection by assuming typical student growth over the six months until the high-stakes test is administered.

Many educators prefer to administer a Star test much closer to the date when the high-stakes test would have occurred, minimizing the need for any statistical projection of typical student growth.
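To see why a test closer to the high-stakes date leans less on assumptions, consider a deliberately simplified sketch. It treats typical growth as a constant weekly gain, which is an assumption made here for illustration and not Star's actual projection model. The function name `project_score` and all numbers are hypothetical.

```python
def project_score(current_score: float,
                  typical_weekly_growth: float,
                  weeks_until_test: float) -> float:
    """Project a score forward by ASSUMING constant typical growth.

    This linear rule is a simplification for illustration only.
    """
    return current_score + typical_weekly_growth * weeks_until_test

# Hypothetical student with a current score of 600 and an assumed
# typical gain of 2.5 points per week.
six_months_out = project_score(600, 2.5, 26)  # about six months before the test
two_weeks_out = project_score(600, 2.5, 2)    # two weeks before the test

print(six_months_out - 600)  # → 65.0 points rest on the growth assumption
print(two_weeks_out - 600)   # → 5.0 points rest on the growth assumption
```

The longer the gap, the larger the share of the projection that comes from assumed growth and not from observed performance.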

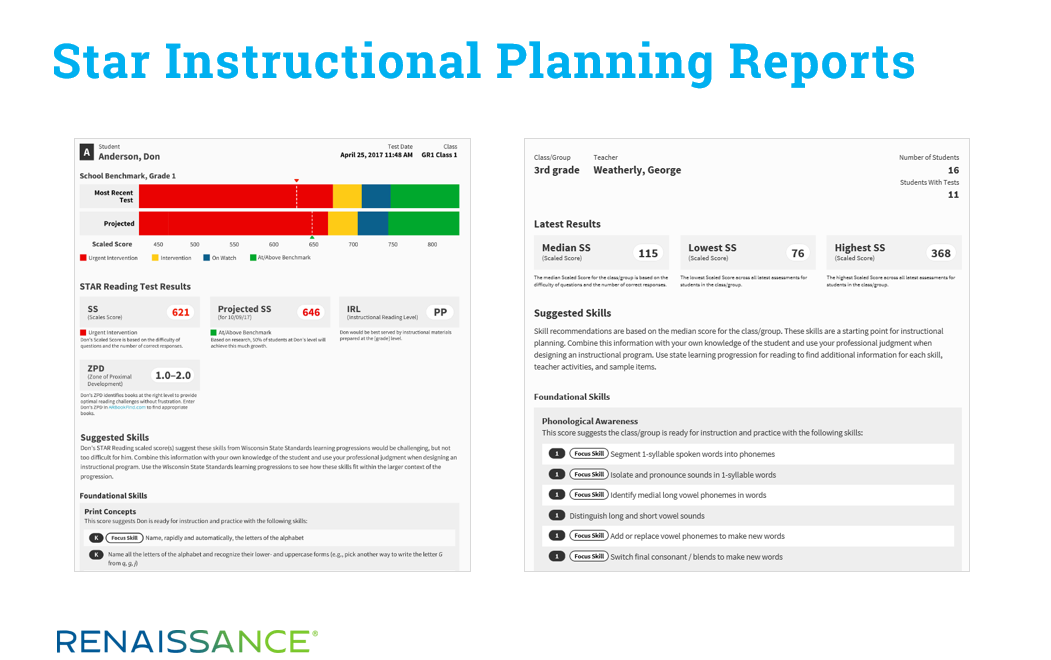

Instructional planning information. Once a Star test is administered, educators can access detailed reports showing the specific skills that individual students—as well as groups and entire classes—are ready to learn next.

However, instructional planning information based on a test that was administered yesterday is one thing; information based on a test that was administered three months ago is something very different. When there is a gap between the testing date and the request for instructional planning information, Star assumes typical growth and adjusts the recommendations accordingly.

To the extent that what your students are experiencing now is not “typical,” instructional planning recommendations based on a Star test given months ago risk becoming stale.

Having reviewed these benefits, we should also state the converse: if schools do not administer Star tests during the spring timeframe (April 1–July 31), they risk missing out on the information above.

Knowing that SGP scores are of particular interest to many educators, we will note that if your students tested in the regular Fall and Winter windows, you will have a Fall to Winter SGP for 2019–2020: in other words, an SGP score for the first half of the school year, which was fairly typical.

Then, when students return to school this fall, you will be provided with a Fall to Fall SGP that will depict growth across the full academic year and the summer. Comparing the fairly normal period (Fall to Winter) to the whole, disrupted one (Fall 2019 to Fall 2020) will provide some insight into the extent to which school closures affected student growth.

Of course, to ensure all key metrics are available to you in the fall, it is advisable to save hard copies now of key Star reports that list SGPs. You’ll find helpful information on this topic, including a list of the relevant Star reports, by clicking here.

Additional remote testing considerations

Beyond the functional nuances of Star discussed above, we must also consider current conditions. Most schools have rarely—if ever—administered Star Assessments remotely, so the following key questions come into play:

- Will all students have access to a testing environment that will be controlled and free of distractions?

- Do all students have internet connectivity and access to a device that meets the technical requirements for administering a Star test?

- Have you consulted all stakeholders who need to be involved in the decision-making process regarding testing students remotely?

- Do you have processes in place to ensure fidelity of testing in a remote setting?

These and other considerations are covered in our remote testing guide for administrators, but the ultimate question may be this: Does the benefit of having spring assessment data outweigh the time and effort needed to administer the test with fidelity?

Finally, any consideration of remote testing must also acknowledge that, given these atypical testing conditions, there is likely to be greater variability in test results. Some students will inevitably test with distractions, and others will receive more outside assistance than is appropriate. While scores from a remote administration can be an informative data point, we should not interpret them too rigidly through the lens of traditional norms. Norms assume controlled conditions and, clearly, much of education has been disrupted this spring.

Conversations about whether to test remotely are not unique to Renaissance or to Star Assessments. Assessment providers at every level, from preschool through university and professional levels, are similarly advising their customers. Some have even gone so far as to say that their assessments are absolutely not available for remote administration. At Renaissance, we believe that the decision of whether to test belongs with the school and district administrators who are using Star. You know your specific needs and your capabilities far better than we do, and we stand ready with advice and resources to support your successful use of Star Assessments, no matter which decision you ultimately make.