September 4, 2014

Lately, I’ve been hosting Thanksgiving at my house. I’ve found that family members frequently request a favorite side dish, want to try a new recipe, or even offer to help out in the kitchen. I’m open to trying something new, and I like the idea of making Thanksgiving dinner a group effort. That said, fun ideas and helping hands can quickly become a bit of a logistical nightmare as schedules change last minute, ingredients go missing, baking times don’t align, and that casserole Martha Stewart made look so easy turns out barely edible.

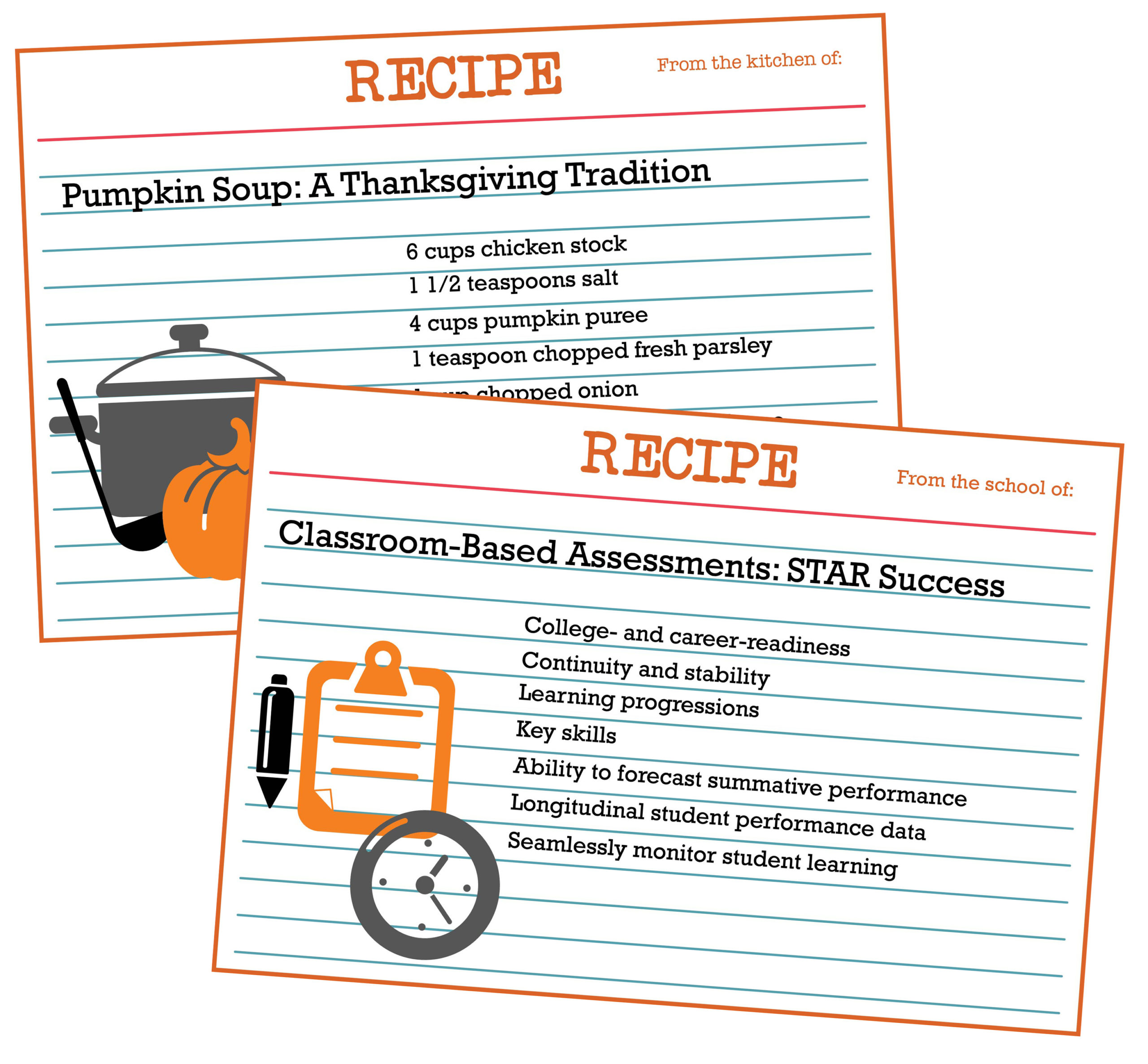

That’s why I always have a couple of tried-and-true recipes on hand. You know, the ones that are quick, easy, tasty, and make enough to feed an army. In a way, this new assessment era is a lot like my Thanksgiving gatherings, and some of the same lessons might apply.

Of course, the stresses of a typical Thanksgiving dinner are nothing compared to those facing educators today. When the Smarter Balanced and PARCC multi-state assessment consortia first introduced plans for their tests, new college- and career-ready standards were generally well received. The 2014–15 school year—when the new tests were to be implemented—seemed a long way away. As the deadline draws near, however, tensions are rising. There’s a chorus of concerns about Common Core. Critics point to widespread budget woes and question whether new high-stakes tests will deliver what they promise.

As a result, states’ assessment plans are changing by the week. About four out of five states are either adopting an entirely new summative test or making substantial changes to their existing one. Much like Thanksgiving at my house, a lot of last-minute changes and late-breaking plans are colliding to form a logistical nightmare.

In the upcoming year, it may be difficult to anticipate whether students are likely to meet new benchmarks, and the number of students reaching proficiency will likely decrease as a result of more rigorous assessments. During this time of stress and uncertainty, it’s nice to have some trusted “recipes” to fall back on, such as the tried-and-true classroom-based assessments many schools are already using for monitoring student progress and informing instructional decisions.

Classroom assessments with certain characteristics can help educators manage the transition to new summative assessments in some important ways:

Continuity and stability. Classroom assessments that report student performance across years on a single longitudinal scale can provide continuity over time. This contributes to the body of evidence educators need when deciding whether students are on track for college and career readiness. Longitudinal assessments also lend stability as parents and educators try to understand whether student performance is actually declining, remaining stable, or improving. Shifts in summative assessments will break the longitudinal record of student performance; classroom assessments will be an important part of bridging that gap and monitoring student learning without interruption.

Skill-specific information. Even though standards and proficiency benchmarks are changing for many, learning progressions and other tools available in some classroom assessments show how students are developing with regard to key skills. For example, Renaissance Star Assessments® report student performance on a learning progression and summarize mastery of state standards. This allows educators to monitor progress with classroom-based assessments that focus on the same skills and standards emphasized by their summative assessments.

Ability to forecast summative performance, once linking studies can be completed. In the past, linking studies have made it possible for educators to use brief classroom assessments given throughout the year to estimate end-of-year summative assessment performance. It’s important to understand that linking studies require performance data from both tests (e.g., the classroom-based assessment and the new summative test). For this reason, no studies can link any existing test to the new summative assessments until scores are available from the 2014–15 school year. Educators and other experts who will be setting proficiency cut points on PARCC and Smarter Balanced assessments will be using data from existing tests such as the National Assessment of Educational Progress (NAEP), among other information, to guide their decisions. NAEP and other widely used summative tests set proficiency cut points at a level that about 30 to 40 percent of students can reach. In other words, the cut point falls at roughly the 60th to 70th percentile. Until linking studies can be conducted, educators may want to use percentiles similar to those used by influential existing assessments such as NAEP to gauge whether students are likely to achieve proficiency.
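For readers who like to see the arithmetic, here is a minimal Python sketch of that percentile rule of thumb. It is only an illustration: the function names, the three outlook labels, and the default 60/70 band are assumptions made for this example, not part of any assessment product or official cut-score method.

```python
def cut_percentile(percent_reaching_proficiency):
    """Convert 'X percent of students reach proficiency' into the
    percentile at which the cut point sits (e.g., 40 percent -> 60th)."""
    if not 0 <= percent_reaching_proficiency <= 100:
        raise ValueError("percent must be between 0 and 100")
    return 100 - percent_reaching_proficiency


def proficiency_outlook(percentile_rank, low_cut=60, high_cut=70):
    """Rough outlook for one student, based on a percentile rank.

    low_cut and high_cut are assumed values taken from the
    30-to-40-percent range described above; adjust them locally.
    """
    if not 0 <= percentile_rank <= 100:
        raise ValueError("percentile_rank must be between 0 and 100")
    if percentile_rank >= high_cut:
        return "likely proficient"
    if percentile_rank >= low_cut:
        return "borderline"
    return "likely below proficient"


# Hypothetical percentile ranks from a classroom assessment.
for rank in (45, 63, 82):
    print(rank, proficiency_outlook(rank))
```

Treating the 60th-to-70th range as a "borderline" band, rather than picking a single cut, reflects the uncertainty that remains until real linking studies are available.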

Tried-and-true culinary favorites have helped me navigate Thanksgiving festivities and focus on what’s important: enjoying a meal with friends and family. Similarly, familiar and reliable classroom-based assessments can help educators in this time of transition, so they can focus on what’s important: student learning.
